Pair-programming Superbill with Codex-5.2 and Claude Sonnet 4.6

The Value of Software Development Is (near) Zero

Let me start by saying something that might be seen as provocative.

What if the value of writing software has collapsed to zero?

Not the value of software or SaaS companies. Reports of their death might be greatly exaggerated. They still enjoy enormous profit margins and multi-year enterprise contracts.

But the value of writing it manually might very well have.

Something has changed.

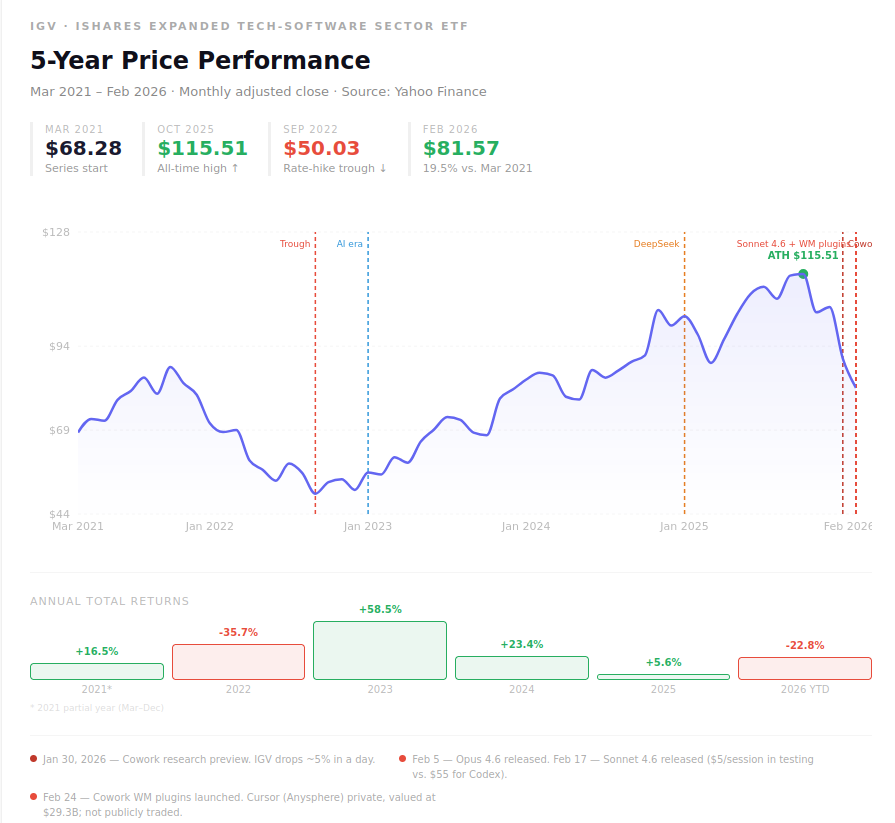

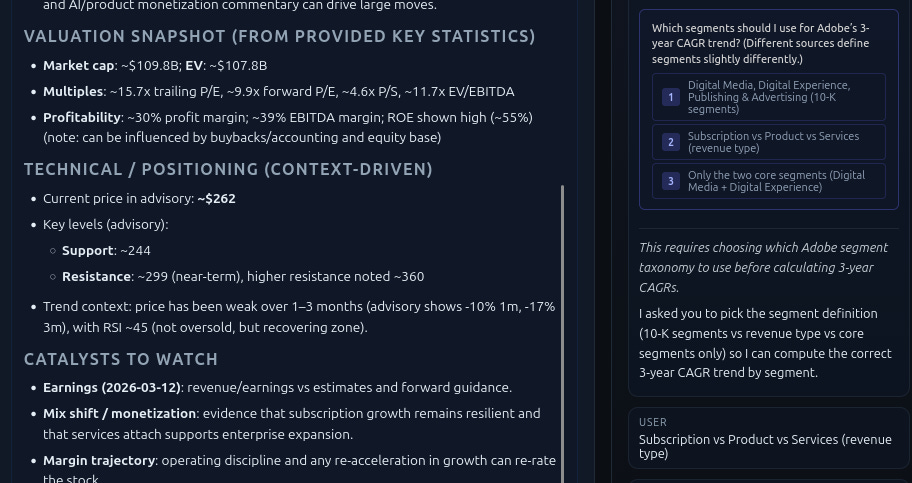

Since the launch of Sonnet 4.6, COBOL to Java, and Wealth Management as a plugin to Cowork, the stock market for many industries, and the software application sector in particular, has been in turmoil. Measured by the IGV ETF, this sector has lost more than 20% of its value since the beginning of the year. Of course, that is relative to an all-time high just a couple of days prior.

So? The stock market fluctuates. Big deal, one might say. In reality, we all know there is more to software companies than just writing software.

Data network effects. Over the last 20 years, SaaS companies have compiled formidable datasets that are extremely hard to replicate. For example, Salesforce knows your customer history. Workday knows your hiring pipeline and org chart. Because that data compounds over the years, no agent can replicate it from scratch, even with synthetic data.

Switching costs. I have been building and integrating software for enterprises for many years. ERP migrations take three years and are a lot of pain. Contract negotiations take years, integration projects take months, and training staff on the new software takes days if not weeks.

Compliance and trust. Then there is regulation. In regulated industries like finance, “an AI built this” is not yet a procurement answer. Vendors with SOC 2 compliance, FedRAMP authorization, and audit trails still hold the keys to the kingdom.

Distribution. Finally, an established position in the market won’t change overnight. Salesforce has 150,000 customers and a direct sales force. That doesn’t disappear when a better tool exists. But it might erode if the service is better somewhere else. And most SaaS companies have been cutting back on customer support recently.

And in my opinion, these companies can improve their profit margins even further by using AI tools themselves.

Personal story time. I paid for a QuickBooks subscription for many years and wanted to cancel it after I moved to a different accounting solution (not vibe-coded). When I went to their website to cancel, I could not log in because the 2FA was sending the code to a phone number (my old Singaporean number) that I no longer have access to. I also could not file a ticket because the customer support system was behind the login screen. Reading through the customer support sections of the website, posting on socials, and emailing customer support did not help either. So when my credit card expired, I could not give them my new one, and the payments stopped naturally.

So my hope is that AI might actually help to improve customer service.

But I remain doubtful.

Where coding tools are extremely effective, they don’t threaten the business model; instead, they further improve margins. If your product team ships 3x faster at the same headcount, you can run more experiments and add new features much faster, hopefully at higher quality.

Therefore, the incumbents who adopt AI coding tools aggressively could actually emerge stronger, not weaker.

However, there is an emerging micro-SaaS market for boring tasks that may currently be dormant.

My hypothesis is that, for creative minds, the time lag between “I have an idea” and “I have a working system” shrinks every month.

I wanted to test that hypothesis.

So in February, I set out to build something non-trivial, something that required architectural decisions, real data pipelines, and meaningful reasoning.

And I wanted to see how much of my cognitive load I could offload to a model.

The project: an Agentic Ambient Intelligence Layer for my personal investment management. A spiritual successor to SuperBill with a pinch of OpenClaw.

My personal artificial investment team.

The tools under evaluation: Codex-5.2 and Claude Sonnet 4.6, both as VS Code plugins.

What I found was a clear and somewhat surprising gap between them.

Claude Cowork for Wealth Management

Before I get into what I built, I should explain what pointed me in this direction.

As mentioned, in early February, Anthropic rattled financial services software stocks with the release of Claude Cowork. Cowork is a desktop agent designed to sit alongside knowledge workers and handle multi-step professional tasks across applications.

The wealth management plugin, in particular, caught my attention.

It’s built to do what advisors spend most of their non-client time doing:

prep for client meetings,

build financial plans,

rebalance portfolios, and

identify tax-loss harvesting opportunities.

What makes Cowork unique, from my perspective, is not the financial domain knowledge. Rather than running Claude as a separate chatbot, it augments enterprise software tools, pulling context and data without users needing to leave the window they’re working in. That is a huge adoption driver. And something I wrote about here and here.

The wealth management plugin specifically can analyze portfolios, identify drift and tax exposure, and generate rebalancing recommendations at scale — wired into real data through connectors for tools like FactSet (also a SaaS company), MSCI, and others.

Anthropic’s broader financial services suite will go further.

It will include:

a financial analysis agent that conducts market and competitor research and handles financial modeling;

an equity research agent that parses earnings transcripts, updates financial models, and drafts research notes; and

a private equity agent that reviews large document sets, models scenarios, and scores opportunities against investment criteria.

Cowork’s plugins, besides connecting with Excel (a must-have), bundle skills, connectors, slash commands, and sub-agents for each workflow.

And they’re designed to be customized to a firm’s own voice, templates, and processes.

Watching this unfold, I had a simple reaction: I want one of these for myself.

Since I had built DeepSearch for Investing in 2022/2023, I figured I would refactor the codebase using Codex-5.2 and Claude Sonnet 4.6 through Copilot in VS Code.

The system I wanted to build is best described as a multi-agent loop with persistent memory and sensory input that grounds the agent in real-time market data.

You might wonder: why is this fool using two different coding models?

I think Claude Sonnet in Copilot is really fantastic. At work, I am using Codex. I had some free OpenAI credits and wanted to compare which one I like better.

First, some design considerations.

Overall Stack

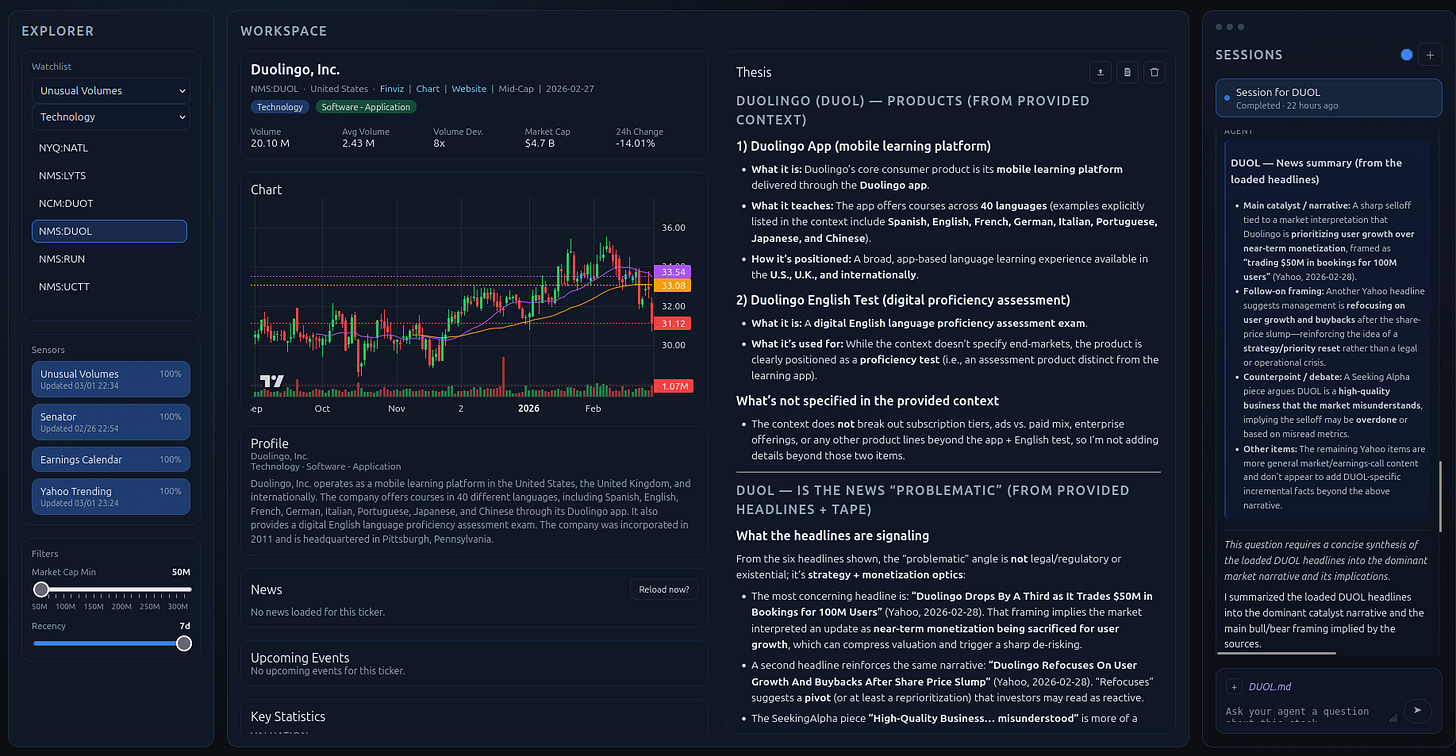

For the UI, I moved away from Streamlit and Gradio, which are fun for simple projects but don’t work well for more complex solutions. Now at the top sits a React + Vite frontend with three sections: a configuration layer, a workspace for investment data and analytical write-ups, and an agent session so I can talk to agent Superbill in real time.

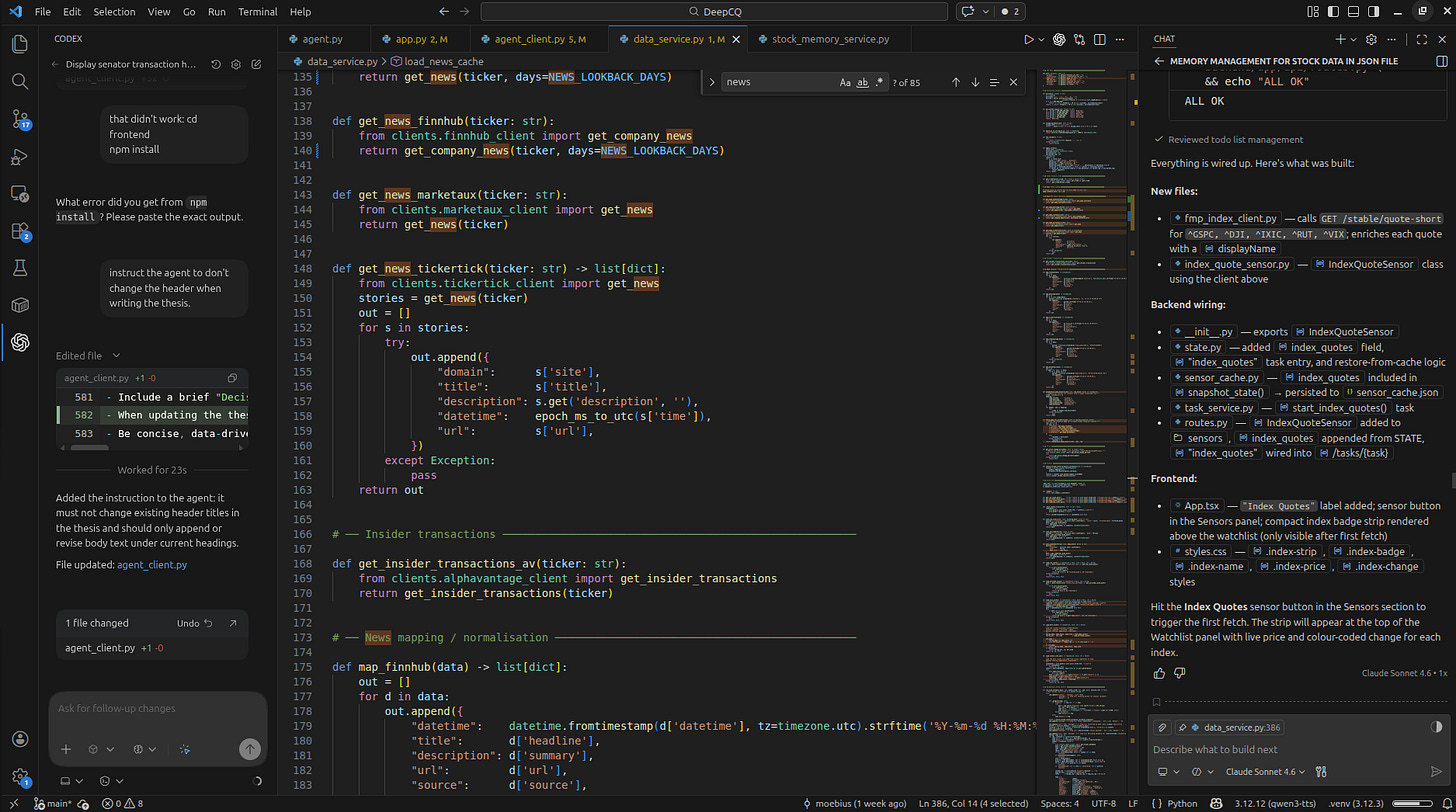

Behind that lives an AI service (port 8001), i.e., the ambient intelligence layer, running the OpenAI Agents SDK with a tool dispatcher and a streamer for live updates from the agent. In the past, I built a lot on Smolagents, but I wanted to revisit the OpenAI SDK, as almost a year had passed since I last piloted it.

Then, I have a dedicated backend (port 8000) for handling state, scheduling, and sensor orchestration. For longer-running environment updates, e.g., an unusual volume tracker, I want to use APScheduler. Data about the environment comes from several sources, including Yahoo Finance, FMP, Finnhub, Benzinga, SEC EDGAR, Senate disclosures, and Alpha Vantage. Here I could reuse what I already have.

Sensors?

Well, for me, sensors are part of standard agent architectures as they provide information about the environment, helping with embodiment and therefore grounding.

Sensors and Environment

Every intelligent system needs to know what’s happening in its environment. Rather than relying on the user to surface relevant changes, I built a sensor layer that runs continuously in the background.

Four sensors form the core of the environment model:

Unusual Volumes. A long-running task that compares average trading volumes over the observation horizon with the last trading session.

Calendar. A forward-looking sensor that identifies upcoming earnings calls or interesting corporate events.

Senator/Politician. A backward-looking sensor that analyzes which stocks politicians with potentially inside information have traded.

Yahoo Trending. In the past, I looked more at social sentiment, but I came to realize that this is an extremely noisy signal. And this Yahoo Trending list is very similar in function. So I don’t bother with X or Reddit discussions anymore.

Each sensor writes to a shared sensor_cache that the intelligence layer reads when constructing its reasoning context.

The benefit is that the agent doesn’t need to ask what’s happening in the market.

It already knows.
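To make the sensor idea concrete, here is a minimal sketch of an unusual-volume check that writes into a shared cache. Everything in it is an assumption for illustration: the 2.0 threshold, the function names, and the choice to model sensor_cache as a directory with one JSON file per sensor. The actual system may store and score things differently.

```python
import json
import tempfile
from pathlib import Path
from statistics import mean


def unusual_volume(volumes: list[int], threshold: float = 2.0) -> dict:
    """Compare the last session's volume with the average of the earlier sessions.

    `threshold` is an illustrative cutoff, not a value taken from the article.
    """
    if len(volumes) < 2:
        raise ValueError("need at least one historical session plus the last one")
    *history, last = volumes
    ratio = last / mean(history)
    return {"ratio": round(ratio, 2), "unusual": ratio >= threshold}


def write_sensor(cache_dir: Path, sensor: str, payload: dict) -> Path:
    """Persist one sensor's latest reading as JSON (hypothetical cache layout)."""
    cache_dir.mkdir(parents=True, exist_ok=True)
    path = cache_dir / f"{sensor}.json"
    path.write_text(json.dumps(payload, indent=2))
    return path


def read_context(cache_dir: Path) -> dict:
    """Collect every cached reading so it can be placed into the agent's context."""
    return {p.stem: json.loads(p.read_text()) for p in sorted(cache_dir.glob("*.json"))}


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        cache = Path(tmp) / "sensor_cache"
        reading = unusual_volume([100, 100, 100, 100, 450])
        write_sensor(cache, "unusual_volume", {"ABC": reading})
        print(read_context(cache))
```

The point of the split is that sensors only write and the intelligence layer only reads, so a scheduler can refresh the cache without the agent being involved.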

Memory Architecture

One of the biggest gaps between a useful AI assistant and a genuinely intelligent one is memory. Most LLM applications treat each conversation as a blank slate. And if they have memory, it’s very basic. That’s probably fine for one-off tasks, but it’s fatal for anything that requires continuity.

I broke memory into three layers, borrowing from my explorations into cognitive science:

Episodic memory captures what happened. Here I store each session the agent has about an equity. An active_session file logs the current session in real time. A session_archive stores past sessions. The last six conversation turns are injected directly into the system prompt, giving the model genuine short-term context. Markdown is an unexpected gift here.

Semantic memory captures what is known. Each tracked stock gets a {TICKER}_context, a living thesis document with the current context of the stock, as well as a {TICKER}.json for the price and news cache. Volume scan results, sensor snapshots, and analyst notes all need to persist across sessions. When I come back after a week away, the system remembers what it thought about a name and why.

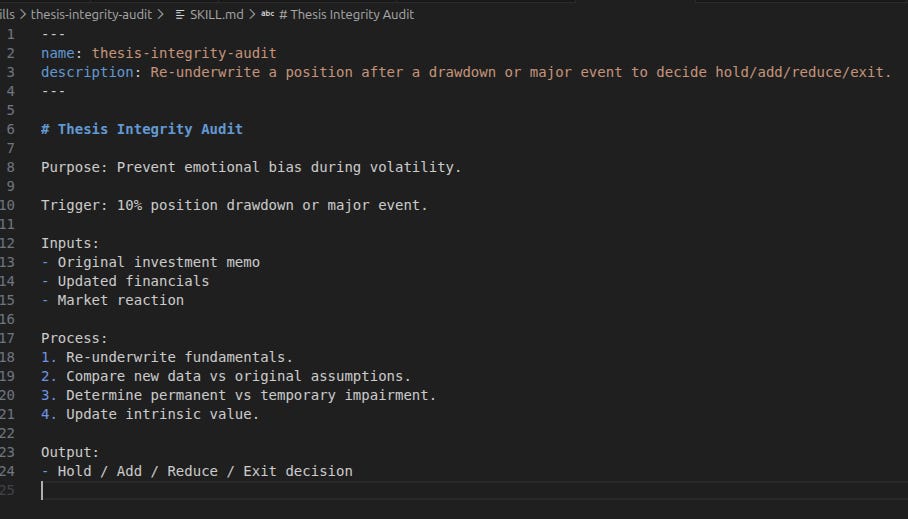

Procedural memory captures how to do things. Anthropic calls these skills, and I agree. Essentially, these are SOPs encoded as prompts. I want to implement them as graphs, but this might come next.
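As an illustration of how the episodic and semantic layers could meet in the system prompt, here is a small sketch. The class and function names are hypothetical, and the prompt layout is an assumption; only the six-turn window and the use of Markdown come from the description above.

```python
from collections import deque

MAX_TURNS = 6  # the last six conversation turns are injected into the prompt


class EpisodicMemory:
    """Rolling window over the turns of the active session (hypothetical helper)."""

    def __init__(self, max_turns: int = MAX_TURNS) -> None:
        # A bounded deque silently drops the oldest turn once the window is full.
        self.turns = deque(maxlen=max_turns)

    def add(self, role: str, text: str) -> None:
        self.turns.append((role, text))

    def as_markdown(self) -> str:
        return "\n".join(f"- **{role}**: {text}" for role, text in self.turns)


def build_system_prompt(base: str, thesis: str, episodic: EpisodicMemory) -> str:
    """Combine base instructions, the ticker's thesis document, and recent turns."""
    return (
        f"{base}\n\n"
        f"## Current thesis\n{thesis}\n\n"
        f"## Recent conversation\n{episodic.as_markdown()}"
    )


if __name__ == "__main__":
    memory = EpisodicMemory()
    for i in range(8):
        memory.add("user" if i % 2 == 0 else "agent", f"msg{i}")
    print(build_system_prompt("You are Superbill.", "Placeholder thesis text.", memory))
```

A bounded deque keeps the window logic out of the prompt builder: older turns fall out on their own and would live only in the session archive.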

Agents, Skills, and Tools

What makes this system genuinely agentic, rather than just a fancy bot, is the combination of tools, memory, skills, and a reasoning loop that connects them.

Tools are the hands of the system, mostly deterministic capabilities for external data retrieval. The agent can autonomously fetch current stock prices, pull recent news, retrieve SEC filings, check insider trades, search earnings calendars, scan for volume anomalies, and fetch arbitrary URLs when needed.

This is really powerful because the agent can call them on demand whenever it decides it needs more information.
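A tool dispatcher of this kind can be sketched in a few lines. This is a generic registry pattern, not the OpenAI SDK’s own API and not necessarily how Superbill wires its tools; the `tool` decorator, `dispatch`, and the placeholder `get_price` are all hypothetical names.

```python
import json
from typing import Callable

# Registry mapping tool names to plain Python callables.
TOOLS: dict[str, Callable[..., object]] = {}


def tool(fn):
    """Register a function under its own name so the agent can request it."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def get_price(ticker: str) -> dict:
    # Placeholder: a real tool would query a market data provider here.
    return {"ticker": ticker.upper(), "price": None}


def dispatch(name: str, arguments: str) -> str:
    """Run the named tool with JSON-encoded arguments and return JSON text.

    Errors are returned as data so the model can read them and retry.
    """
    if name not in TOOLS:
        return json.dumps({"error": f"unknown tool: {name}"})
    try:
        result = TOOLS[name](**json.loads(arguments))
    except (TypeError, ValueError) as exc:  # bad arguments or malformed JSON
        return json.dumps({"error": str(exc)})
    return json.dumps(result)


if __name__ == "__main__":
    print(dispatch("get_price", '{"ticker": "abc"}'))
    print(dispatch("get_filings", "{}"))
```

Returning errors as JSON instead of raising keeps the reasoning loop alive when the model asks for a tool that does not exist or passes the wrong arguments.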

Skills are the learned task competencies. I like to call them playbooks.

I gave Superbill fourteen of them, each representing a distinct analytical workflow.

Here are some examples:

Investment Opportunity Triage. Is this worth looking at?

Deep Fundamental Underwriting. What does the business actually look like?

Conviction Calibration Engine. How confident should I be, and why?

Thesis Integrity Audit. Am I fooling myself?

Variant Perception Detection. What does the market believe that I disagree with?

Position Sizing and Concentration. How much capital should this get?

Exit Discipline Engine. When do I sell, and what would change my mind?

Capital Preservation Protocol. What’s the downside scenario?

Daily Portfolio Monitoring. What changed overnight?

Performance Attribution and Error Analysis. Where was I right, and where was I wrong?

Skills are flat files.

They are loaded into the system prompt and invoked contextually, when needed, through progressive disclosure.

The agent is fine-tuned to know which playbook applies to a given situation.
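One way to read “flat files plus progressive disclosure” is that only a short index of the playbooks sits in the system prompt, and the full text of a playbook is loaded once the agent picks it. The sketch below assumes one Markdown file per skill whose first line is its title; the file layout and function names are hypothetical.

```python
import tempfile
from pathlib import Path


def skill_index(skills_dir: Path) -> str:
    """List each skill by file name and title line only, for the system prompt."""
    lines = []
    for path in sorted(skills_dir.glob("*.md")):
        first_line = (path.read_text().splitlines() or [""])[0]
        title = first_line.lstrip("# ").strip()
        lines.append(f"- {path.stem}: {title}")
    return "\n".join(lines)


def load_skill(skills_dir: Path, name: str) -> str:
    """Return the full playbook text once the agent decides it is relevant."""
    return (skills_dir / f"{name}.md").read_text()


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        skills = Path(tmp)
        (skills / "thesis_integrity_audit.md").write_text(
            "# Thesis Integrity Audit\nAm I fooling myself?\n"
        )
        print(skill_index(skills))
        print(load_skill(skills, "thesis_integrity_audit"))
```

With fourteen playbooks, the index costs a few lines of context, while the detailed instructions only consume tokens when they are actually used.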

The ReAct loop, my trusted friend, ties it all together.

The pattern I’m working toward, though, is Sense → Symbolize → Plan → Act, my neuro-symbolic approach that goes beyond simple prompt-response.

The agent senses changes in the environment via the sensor layer, symbolizes them into structured representations, plans a course of action, and executes. It doesn’t just answer questions. It thinks.

And it can ask questions if it needs further information.
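The four stages can be sketched as plain functions to show how data changes shape along the way. This is a deliberately simplified, rule-based illustration: in the real system an LLM does the planning, and the `Signal` type, the threshold, and the cache layout used here are assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Signal:
    """Symbolic representation of one raw sensor reading (hypothetical type)."""
    ticker: str
    kind: str
    strength: float


def sense(sensor_cache: dict) -> list[dict]:
    """Flatten the raw readings of every sensor into one list, tagged by sensor."""
    return [
        dict(reading, kind=kind)
        for kind, readings in sensor_cache.items()
        for reading in readings
    ]


def symbolize(readings: list[dict]) -> list[Signal]:
    """Turn loose dictionaries into typed, comparable signals."""
    return [Signal(r["ticker"], r["kind"], float(r.get("strength", 0.0))) for r in readings]


def plan(signals: list[Signal], threshold: float = 2.0) -> list[tuple[str, str]]:
    """Map strong signals to (playbook, ticker) steps; the rule is illustrative."""
    return [("triage", s.ticker) for s in signals if s.strength >= threshold]


def act(steps: list[tuple[str, str]], playbooks: dict) -> list[str]:
    """Execute each planned step with the matching playbook callable."""
    return [playbooks[name](ticker) for name, ticker in steps]


if __name__ == "__main__":
    cache = {"unusual_volume": [{"ticker": "ABC", "strength": 4.5},
                                {"ticker": "XYZ", "strength": 1.1}]}
    playbooks = {"triage": lambda ticker: f"triage:{ticker}"}
    print(act(plan(symbolize(sense(cache))), playbooks))
```

The typed intermediate step is what separates this from a plain prompt-response loop: the planner reasons over structured signals, not raw text.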

So much for the concept. Now to the findings.

Contrasting the Experience: Codex-5.2 vs Claude Sonnet 4.6

Here’s where I’ll be direct.

Codex-5.2 felt like a junior developer. Energetic, fast, willing to take a swing at anything. But the code it produced was hard to read, dense, inconsistent in style, and occasionally syntactically broken. It completed tasks, but the output didn’t feel reasoned. It felt generated. Over the course of the project, Codex cost me $55, and I didn’t feel I made the progress I wanted.

More troublingly, the development experience felt frantic. Codex would make sweeping changes across files without communicating its intent clearly. I found myself spending time auditing its work rather than building on it.

For a system with real architectural complexity, multiple services, a memory layer, sensor orchestration, that audit overhead added up fast, and only compounded my cognitive fatigue.

Claude Sonnet felt like a senior developer. Slower in some ways, but more deliberate. The code it produced was cleaner and more consistent. When I asked it to build something non-trivial (the sensor layer, the memory architecture, the tool dispatcher, and especially the streaming functionality of the agent session, a task that Codex failed at), it would often pause to reason about structure before diving into implementation.

In my opinion, the edits were more targeted. The refactors made sense. And the overall cost was 1/11th of Codex’s: $5. Of course, you can argue that I used Claude later in the project. But by then more of the framework was already implemented, so every error Codex made took longer to roll back.

And this is another insight that reflects something real about my efficiency, but also the SDLC. Codex generated more tokens to accomplish less. Claude’s outputs required fewer corrections and less review. For a project like this, where the architecture has to hold together across many moving parts, that difference compounds quickly. And not only in monetary terms.

Conclusion

The SDLC is changing. The part that required you to hold a large, complex system in your head and translate it, laboriously, into code is becoming automated. This means that in organizations, the importance of senior developers’ experience diminishes. So in my opinion, what remains valuable is the synthesis: the ability to know what you’re building and why, and to use that to guide architectural instincts and product judgment.

From a functionality standpoint, I was impressed by what could be built: a system that can produce a full backtest report with graphs, tables, and everything. And that changes everything. Throughout 2025, most coding models were not good enough. But then December happened. And the gap between “almost” and “actually works” closed in a matter of weeks. Operationally, tasks I used to split into small chunks and painstakingly debug (with prints and tests), the AI now zero-shots as entire modules with no bugs (well, almost; Codex produces some).

A year ago, I probably thought of agents as an augmentation of what I want to do, a convenience that could help me be more productive. Six months ago, I believed it was coming, but that it would still take 12-18 months.

Today it’s here.

If the last time you tested coding capabilities was six months ago, your opinion has expired!

My artificial investment team is a small illustration of what becomes possible when the translation layer is cheap. I built fourteen analytical playbooks with real-time market sensors and a three-layer persistent memory within a few hours.

A reasoning loop that wakes up every morning already knowing what happened while I was asleep.

I built this…or didn’t I?

I only described what I wanted and iterated on what I got.

The programming language of the next iteration of software will be English.

The gap between Codex and Claude on this project was real and impactful. But this will close eventually.

The more important observation is that both are changing the ceiling on what one person can build. The question is no longer whether you can build a sophisticated system alone.

It’s whether you have a clear enough vision of what you want to build.

That part, at least, is still on you.